The problem

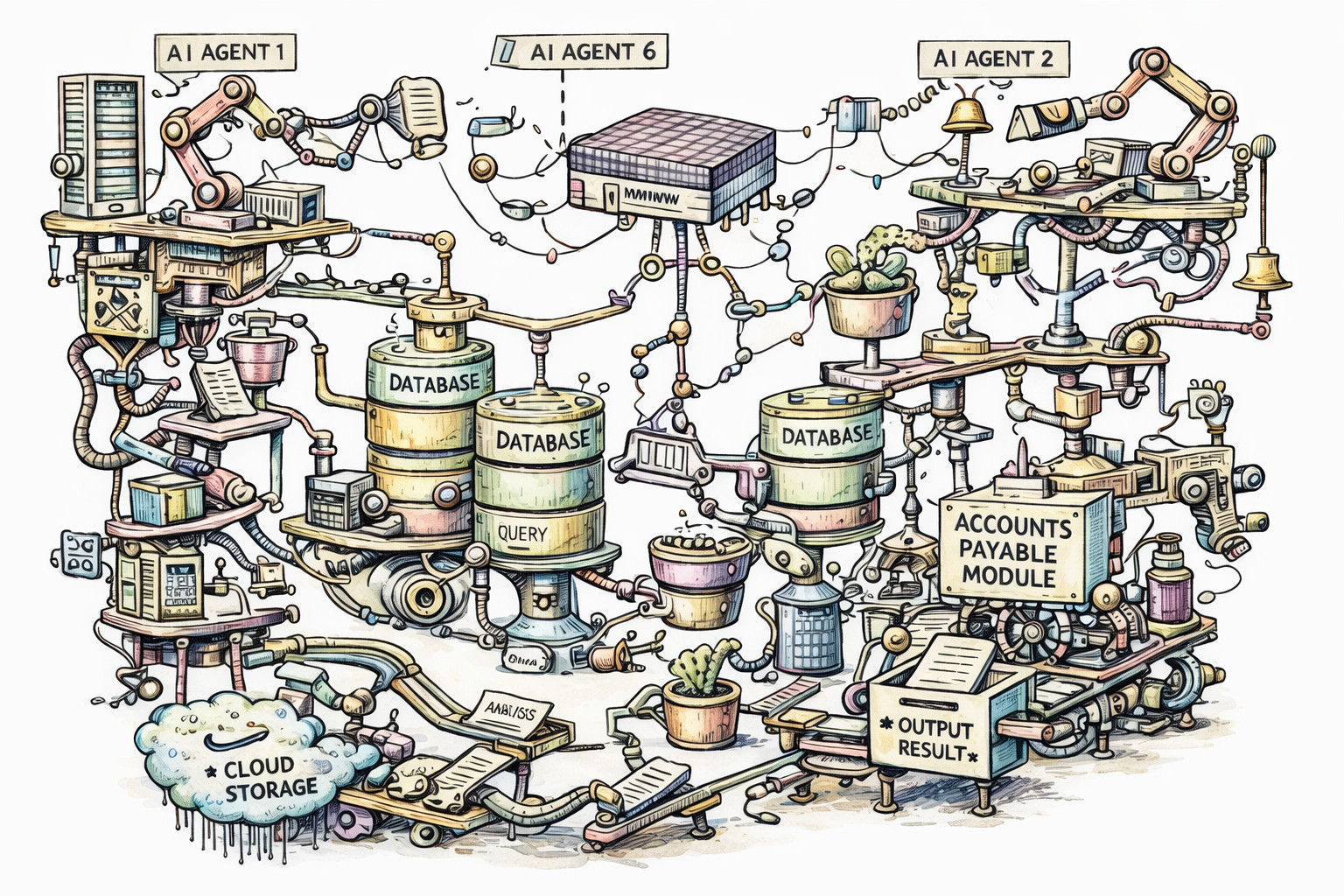

AI and agentic development are powerful force multipliers, allowing teams to do more with less. There is a risk, however, that many small agent-led changes gradually produce systems that are functional but fragile. The knowledge of why the final system is shaped the way it is can end up living nowhere, because no human ever held the whole thing in their head. These systems can start to resemble Rube Goldberg machines.

Rube Goldberg was an American cartoonist famous for drawings of absurdly over-engineered machines that performed simple tasks through a chain of unnecessarily complicated steps. The machines worked; they just worked in a deeply convoluted way.

Why could this happen?

This risk is not unique to AI. Incremental software delivery has always had a tendency to optimise locally and drift globally. Agentic development just increases the speed and scale at which that can happen.

In an all-human team, new features are usually added with some regard for what is already there. Good teams often carry cross-domain knowledge and enough architectural context to notice when the whole system is starting to wobble. They get an uneasy feeling when code starts to smell. That kind of complexity minimisation requires judgment, not just technical capability.

By contrast, when an agent takes a ticket from spec to go-live, it is usually optimised to complete the task successfully, not to minimise long-term system complexity. It rarely steps back and asks whether the whole approach should be rethought. Agents do not get that uneasy architectural feeling; they focus on satisfying the task and passing the available checks.

A Rube Goldberg machine is not built in a single ticket. It forms gradually over time, largely because the path of least resistance for an agent is additive. When something does not work, it adds a wrapper, a retry, a duplicate table, or a translation layer. Without a holistic view, that is how a contraption emerges.

So what can we do?

Can’t we just specify more?

Specs written by humans are often under-specified in the ways that matter most here. They describe the “what” much more readily than the “how”. An agent filling in the gaps can make locally reasonable decisions that become a contraption when viewed globally, especially across tickets written by different people at different times.

Asking an agent in prose to “always consider the high-level architecture” might help, but it can just as easily be ignored. What helps more is turning architectural intent into checks that fail loudly.

Add guardrails

Guardrails are automated checks that enforce constraints on what an agent can produce. They are not suggestions in prose; they are hard failures that block progress until resolved. A guardrail does not get forgotten or quietly deprioritised. It fails the build. Guidelines that exist only in prose, by contrast, invite being ignored. This is evident in the sad tale of a production database being deleted, where the AI chose to ignore every single prose guideline it was given.

In practice, guardrails might look like:

- Complexity limits: a CI step that fails if a single PR touches more than a set number of files, or introduces more than a set number of new abstractions.

- Architecture fitness functions: automated tests that verify structural rules, such as “no module in layer A may import from layer C” or “all database access must go through the repository layer”.

- Critic agents: a second agent whose sole job is to review the output of the first, specifically asking “does this need to exist?” rather than merely “does this work?”.

- Refactor gates: a rule that triggers a mandatory human architecture review after every N agent-written tickets ship, before the next batch can begin.
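As an illustration of the architecture fitness function idea, here is a minimal Python sketch of a layer-import check. The package names (`api`, `services`, `storage`), the layer labels, and the `ALLOWED` rules are all hypothetical, chosen only to mirror the “no module in layer A may import from layer C” example. A real project would substitute its own layout, and might prefer an existing import-linting tool over a hand-rolled check.

```python
import ast

# Hypothetical layering: each top-level package belongs to one layer,
# and each layer lists the layers it is allowed to import from.
LAYER_OF = {"api": "A", "services": "B", "storage": "C"}
ALLOWED = {"A": {"A", "B"}, "B": {"B", "C"}, "C": {"C"}}


def imported_packages(source: str) -> set[str]:
    """Return the top-level package names imported by a piece of source code."""
    packages = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            packages.update(alias.name.split(".")[0] for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.module and node.level == 0:
            # Only absolute imports are checked; relative imports stay in-package.
            packages.add(node.module.split(".")[0])
    return packages


def layer_violations(modules: dict[str, str]) -> list[str]:
    """Check every module (dotted name -> source text) against the layer rules."""
    violations = []
    for name, source in sorted(modules.items()):
        own_layer = LAYER_OF.get(name.split(".")[0])
        if own_layer is None:
            continue  # module is outside the layered packages
        for package in sorted(imported_packages(source)):
            target = LAYER_OF.get(package)
            if target is not None and target not in ALLOWED[own_layer]:
                violations.append(
                    f"{name} (layer {own_layer}) imports {package} (layer {target})"
                )
    return violations
```

Wrapped in a test that asserts `layer_violations(...)` returns an empty list, a check like this turns the layering rule into a build failure rather than a convention that an agent may or may not follow.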

The key property of a guardrail is that it makes unwanted complexity a first-class failure condition rather than an afterthought. If the agent’s solution is too complicated, it does not merge. The agent must either find a simpler approach or escalate to a human.
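A complexity limit of this kind can be very small. The sketch below assumes a Git repository, a base branch called `origin/main`, and a budget of 20 changed files. All three are illustrative assumptions rather than recommendations, and counting files is only a crude proxy for complexity.

```python
import subprocess

MAX_CHANGED_FILES = 20  # hypothetical budget; tune per repository


def check_change_size(
    changed_files: list[str], limit: int = MAX_CHANGED_FILES
) -> tuple[bool, str]:
    """Return (ok, message) for a list of changed file paths."""
    count = len(changed_files)
    if count > limit:
        return False, (
            f"FAIL: {count} files changed, limit is {limit}. "
            "Simplify the change or escalate to a human."
        )
    return True, f"OK: {count} files changed (limit {limit})."


def changed_files_against(base_ref: str) -> list[str]:
    """List files changed between base_ref and HEAD using git."""
    result = subprocess.run(
        ["git", "diff", "--name-only", f"{base_ref}...HEAD"],
        capture_output=True, text=True, check=True,
    )
    return [line for line in result.stdout.splitlines() if line.strip()]


def main(argv: list[str]) -> int:
    """Return a process exit code: 0 if within budget, 1 otherwise."""
    base = argv[1] if len(argv) > 1 else "origin/main"  # assumed base branch
    ok, message = check_change_size(changed_files_against(base))
    print(message)
    return 0 if ok else 1
```

A CI step could run this by calling `sys.exit(main(sys.argv))`, so that an oversized change produces a non-zero exit code and blocks the merge.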

Guardrails help, but they do not remove the need for architectural ownership. Someone still needs to care about the shape of the system.

Does it even matter?

You could argue that this kind of technical debt does not always matter, and in some cases that is true. We may be entering a strange period in which some forms of technical debt become cheaper to rewrite or paper over, because AI reduces the cost of change.

Even so, for live and business-critical systems, that can be a risky bet. The faster a team can ship complexity, the easier it is to create a system that nobody can safely change.

Summary

The risk is not simply that AI agents write bad code. It is that they can produce locally good changes that accumulate into globally incoherent systems. The velocity of agentic development means teams can now build very large, very tangled contraptions before anyone notices. The defence is to put guardrails in place that make architectural drift and unnecessary complexity first-class failure conditions.