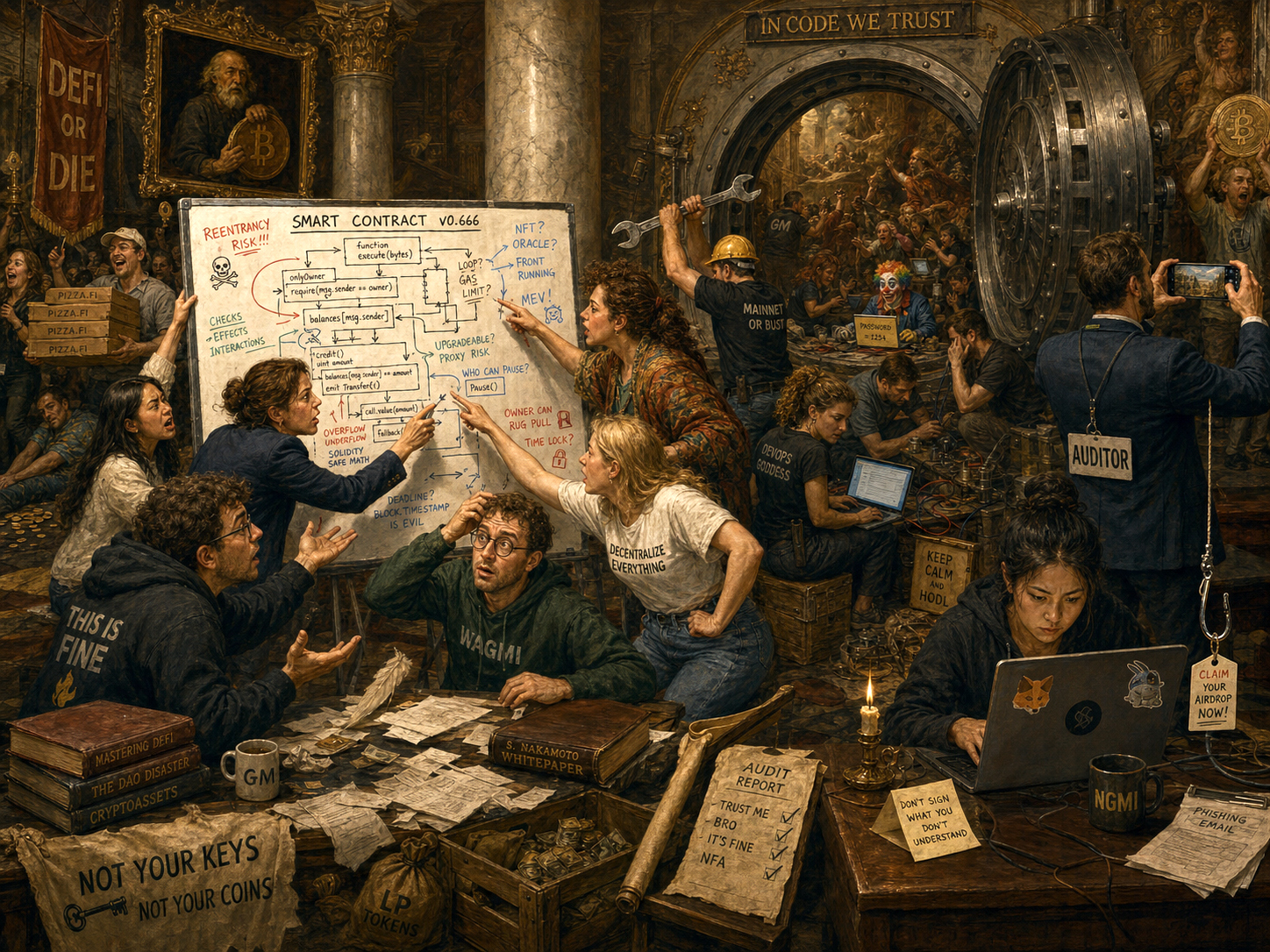

When people talk about smart contract security, they often think of things like code quality and audits. In this post I propose that individual Solidity code faults are, in 2026, largely a solved problem. What is less solved, and considerably more dangerous, is everything else. Smart contract risk is layered, and the layers above the code are where the serious problems still lie.

This post maps out four layers of vulnerability: code-level, composability-level, human-level, and external. Each has its own threat model, its own mitigations, and its own failure modes. The transitions between the levels are often fuzzy and overlapping, and sophisticated attacks span multiple layers. The core point of this post is that code is not the most important layer any more.

There are excellent resources for code-level and composability-level vulnerabilities. The OWASP Smart Contract Top 10 shows which vulnerabilities have been most prevalent over the last couple of years. The Enterprise Ethereum Alliance has a useful specification (EthTrust Security Levels) setting out the security considerations smart contracts should meet. These are useful but are not the full story. Let’s walk through the four layers of vulnerability.

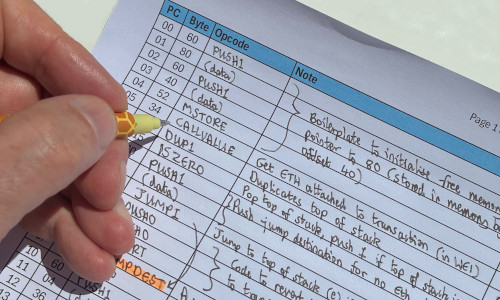

1. Code-level Vulnerabilities

The bugs that can live inside a single contract are well-understood, well-documented, and increasingly well-mitigated. Developers, auditors, and tooling are all better than they were when Ethereum launched.

Reentrancy is a classic. A contract calls out to an external address before updating its own state, and the external address calls back in before the first execution completes. The contract’s state is stale during the callback: in the textbook case, a withdraw function sends funds before zeroing the caller’s balance, so the callback can withdraw the same balance again and again. The fixes (checks-effects-interactions, mutexes) are standard practice.
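To make the stale-state problem concrete, here is a toy Python model (not Solidity) of a vault with a withdraw function. The class names, the `receive` hook standing in for a malicious fallback function, and the deposit amounts are all hypothetical. The vulnerable version pays out before zeroing the balance; the safe version follows checks-effects-interactions.

```python
class VulnerableVault:
    """Toy vault that pays out *before* updating its records."""

    def __init__(self):
        self.balances = {}   # depositor -> credited amount
        self.total_held = 0  # funds actually held by the vault

    def deposit(self, user, amount):
        self.balances[user] = self.balances.get(user, 0) + amount
        self.total_held += amount

    def withdraw(self, user):
        amount = self.balances.get(user, 0)
        if amount == 0 or amount > self.total_held:
            return                       # nothing owed, or vault is empty
        self.total_held -= amount        # funds leave the vault
        user.receive(self, amount)       # external call: user code runs here
        self.balances[user] = 0          # state updated too late


class SafeVault(VulnerableVault):
    """Same vault following checks-effects-interactions."""

    def withdraw(self, user):
        amount = self.balances.get(user, 0)
        if amount == 0 or amount > self.total_held:  # checks
            return
        self.balances[user] = 0                      # effects
        self.total_held -= amount
        user.receive(self, amount)                   # interactions


class Attacker:
    """Re-enters withdraw() from its receive hook, like a malicious fallback."""

    def __init__(self, max_reentries=20):
        self.stolen = 0
        self.reentries_left = max_reentries

    def receive(self, vault, amount):
        self.stolen += amount
        if self.reentries_left > 0:
            self.reentries_left -= 1
            vault.withdraw(self)


def run(vault_cls):
    vault = vault_cls()
    attacker = Attacker()
    vault.deposit("honest_user", 90)
    vault.deposit(attacker, 10)
    vault.withdraw(attacker)
    return attacker.stolen, vault.total_held


print(run(VulnerableVault))  # (100, 0): the honest user's 90 is drained too
print(run(SafeVault))        # (10, 90): only the attacker's own deposit leaves
```

The only difference between the two vaults is the order of two lines, which is why reentrancy was so easy to miss before the pattern became standard.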

Over- and underflows were a serious hazard in Solidity before 0.8.0, when arithmetic silently wrapped around. A uint256 balance of zero, decremented by one, would wrap to 2^256 - 1 instead of failing. OpenZeppelin’s SafeMath library was the canonical fix. Solidity 0.8.0 made SafeMath largely unnecessary by building in overflow and underflow checks that revert by default (code can still opt out with an `unchecked` block).
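The difference between wrapping and checked arithmetic can be sketched in a few lines of Python. Python integers are unbounded, so the sketch emulates uint256 by reducing modulo 2^256; `Revert` and the function names are hypothetical stand-ins, not Solidity APIs.

```python
MODULUS = 2**256          # uint256 arithmetic is modulo 2**256
UINT256_MAX = MODULUS - 1


class Revert(Exception):
    """Stands in for a Solidity revert."""


def add_wrapping(a: int, b: int) -> int:
    """Pre-0.8.0 (or `unchecked { ... }`) behaviour: silently wraps."""
    return (a + b) % MODULUS


def sub_wrapping(a: int, b: int) -> int:
    return (a - b) % MODULUS


def sub_checked(a: int, b: int) -> int:
    """Solidity >= 0.8.0 default: revert instead of wrapping."""
    if b > a:
        raise Revert("arithmetic underflow")  # real Solidity reverts with Panic(0x11)
    return a - b


print(sub_wrapping(0, 1) == UINT256_MAX)   # True: zero minus one becomes enormous
print(add_wrapping(UINT256_MAX, 1))        # 0
try:
    sub_checked(0, 1)
except Revert as err:
    print("reverted:", err)                # reverted: arithmetic underflow
```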

Poor access control covers a broad category: functions that should be owner-only but are not, admin roles that are never revoked, and initialiser functions left callable after deployment. A missing onlyOwner modifier is embarrassingly simple to overlook and has cost projects millions.
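As a rough Python analogue of an onlyOwner modifier plus a one-shot initialiser guard, consider the sketch below. Python has no `msg.sender`, so the caller is passed explicitly as `sender`; `Treasury`, `set_fee`, and `Unauthorized` are hypothetical names used only for illustration.

```python
import functools


class Unauthorized(Exception):
    pass


def only_owner(method):
    """Analogue of an onlyOwner modifier: reject any caller except the owner."""
    @functools.wraps(method)
    def wrapper(self, sender, *args, **kwargs):
        if sender != self.owner:
            raise Unauthorized(f"{sender} is not the owner")
        return method(self, sender, *args, **kwargs)
    return wrapper


class Treasury:
    def __init__(self):
        self.owner = None
        self.initialized = False
        self.fee_bps = 0

    def initialize(self, sender):
        # One-shot guard: without it, anyone could call initialize() later
        # and make themselves the owner.
        if self.initialized:
            raise Unauthorized("already initialized")
        self.initialized = True
        self.owner = sender

    @only_owner
    def set_fee(self, sender, fee_bps):
        self.fee_bps = fee_bps


t = Treasury()
t.initialize("deployer")      # ideally in the same transaction as deployment
t.set_fee("deployer", 30)
for attempt in (lambda: t.set_fee("attacker", 0), lambda: t.initialize("attacker")):
    try:
        attempt()
    except Unauthorized as err:
        print(err)            # "attacker is not the owner", then "already initialized"
print(t.owner, t.fee_bps)     # deployer 30
```

Both checks are one line each, which is exactly why forgetting either is so easy.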

Unbounded loops do not steal funds directly, but they can make a contract permanently non-functional. A loop that iterates over a list that grows with usage will eventually need more gas than the block gas limit allows, at which point every call to that function reverts and any funds that depend on it are stuck.
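The arithmetic behind this failure, and the usual fix of processing work in bounded batches with a resumable cursor, can be sketched in Python. The gas figures below are hypothetical round numbers, not measured costs, and `process_in_batches` is an illustrative name.

```python
def max_iterations(gas_limit: int, fixed_overhead: int, gas_per_iteration: int) -> int:
    """Largest n such that fixed_overhead + n * gas_per_iteration fits in gas_limit."""
    if fixed_overhead > gas_limit:
        return 0
    return (gas_limit - fixed_overhead) // gas_per_iteration


def process_in_batches(items, start, batch_size):
    """Bounded alternative: handle at most batch_size items per call and
    return the index to resume from, so each call's cost stays capped."""
    end = min(start + batch_size, len(items))
    for i in range(start, end):
        items[i] = items[i] * 2   # placeholder for the real per-item work
    return end


# Hypothetical: 30M gas limit, 50k fixed overhead, 25k gas per iteration.
print(max_iterations(30_000_000, 50_000, 25_000))   # 1198: entry 1,199 bricks the loop

items = list(range(10))
cursor = 0
while cursor < len(items):        # in a contract, each pass would be a separate call
    cursor = process_in_batches(items, cursor, batch_size=4)
print(items)                      # [0, 2, 4, 6, 8, 10, 12, 14, 16, 18]
```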

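Delegate calls to untrusted contracts are a subtler hazard. delegatecall executes foreign code in the context of the calling contract’s storage. If the target is untrusted, it can overwrite any storage slot it likes; even with trusted code, a mismatch between the caller’s and the logic contract’s storage layouts (for example after an upgrade) can corrupt state or overwrite it entirely.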
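A toy Python model of a storage-slot collision shows why layout matters. Storage is modelled as a dict from slot number to value, and addresses as integers; `Proxy`, `CounterLogic`, and the admin value are hypothetical. The logic contract believes slot 0 is a counter, but in the proxy’s layout slot 0 holds the admin address.

```python
class Proxy:
    """Owns the storage and forwards calls to a logic contract, delegatecall-style."""

    def __init__(self, admin: int, logic):
        self.storage = {0: admin}   # slot 0 holds the admin in the proxy's layout
        self.logic = logic

    def delegatecall(self, fn_name: str, *args):
        # The logic contract's code runs against the *proxy's* storage.
        return getattr(self.logic, fn_name)(self.storage, *args)


class CounterLogic:
    """Logic contract written on the assumption that slot 0 is a counter."""

    def increment(self, storage: dict):
        storage[0] = storage.get(0, 0) + 1


ADMIN = 0xA11CE                     # hypothetical admin address, as an integer
proxy = Proxy(ADMIN, CounterLogic())
proxy.delegatecall("increment")
print(hex(proxy.storage[0]))        # 0xa11cf: the counter write corrupted the admin slot
```

This class of bug is why standard proxy patterns such as EIP-1967 store admin and implementation addresses in pseudo-random slots that ordinary variables will not collide with.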

Mitigations at this layer

The good news is that these vulnerabilities are largely solved by good engineering discipline today. OpenZeppelin provides battle-tested base contracts; Hardhat and Foundry make testing straightforward. There are mature static analysis tools and an excellent audit industry. Bug bounty programmes and exploit insurance provide a backstop. None of this is perfect, but code-level issues are far less likely to surprise a careful team than they were even five years ago.

What is perfect, within its limited scope, is formal verification. For teams that need the highest assurance, tools such as Halmos can prove that specified invariants hold for every possible input, rather than for a sample of inputs as fuzzing does. These tools require significant additional effort, and the guarantee is only as good as the properties you write down and the bounds the tool explores, but within that scope the result is mathematical certainty.

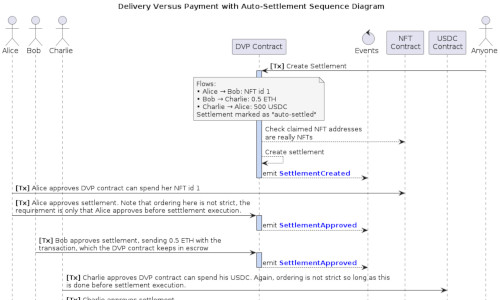

2. Composability-level Vulnerabilities

This layer is where contracts call other contracts, either within a single protocol or across the wider DeFi ecosystem. Composability creates risk. When protocols call other protocols, and those protocols call yet others, the attack surface is not a single contract. It is a graph of interacting systems with emergent behaviour that no single audit can capture easily.

Sandwich attacks exploit the fact that the public mempool is, well… public. An attacker sees your pending swap transaction, places a buy order immediately before it (pushing the price up), lets your transaction execute at a worse price, then sells immediately after. The attacker profits from the price impact your trade creates.
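The price impact that a sandwich captures can be worked through on a toy constant-product (x·y = k) pool in Python. The pool size, trade sizes, and the absence of swap fees are simplifying assumptions; exact fractions keep the numbers precise.

```python
from fractions import Fraction


class ConstantProductPool:
    """Toy x*y=k AMM with no swap fee (a simplifying assumption)."""

    def __init__(self, x, y):
        self.x = Fraction(x)
        self.y = Fraction(y)

    def swap_x_for_y(self, dx):
        dx = Fraction(dx)
        dy = self.y * dx / (self.x + dx)   # keeps x*y constant
        self.x += dx
        self.y -= dy
        return dy

    def swap_y_for_x(self, dy):
        dy = Fraction(dy)
        dx = self.x * dy / (self.y + dy)
        self.y += dy
        self.x -= dx
        return dx


def run_swap(sandwiched: bool):
    """Return (Y received by the victim, attacker's profit in X)."""
    pool = ConstantProductPool(100, 100)
    attacker_y = Fraction(0)
    if sandwiched:
        attacker_y = pool.swap_x_for_y(10)       # front-run: buy Y, pushing its price up
    victim_y = pool.swap_x_for_y(10)             # victim executes at the worse price
    attacker_profit_x = Fraction(0)
    if sandwiched:
        attacker_profit_x = pool.swap_y_for_x(attacker_y) - 10  # back-run: sell Y back
    return victim_y, attacker_profit_x


print([float(v) for v in run_swap(False)])   # victim gets ~9.09 Y
print([float(v) for v in run_swap(True)])    # victim gets ~7.58 Y; attacker nets ~1.80 X
```

A slippage limit on the victim’s swap (a minimum acceptable output) caps how much a sandwich can extract, which is why DEX interfaces expose one.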

Oracle manipulation is one of the most common attacks. Protocols that rely on on-chain price feeds, particularly those using DEX spot prices, are vulnerable to attackers temporarily distorting the price through a large trade or flash loan. The protocol reads the manipulated price and makes decisions (lending, liquidation thresholds) based on bad data. Time-weighted average prices and multi-source aggregation are the standard defences.
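Why a time-weighted average blunts a one-block spike is easiest to see with numbers. The Python sketch below computes a TWAP over a hypothetical 10-minute window and then takes a median across sources; the prices, timestamps, and the two extra source values are made up, and real TWAP oracles (such as Uniswap’s cumulative accumulators) compute this differently on-chain.

```python
from statistics import median


def twap(points, end_time):
    """Time-weighted average price.

    points: [(timestamp, price), ...] sorted by timestamp; each price is
    assumed to hold until the next timestamp (the last one until end_time).
    """
    boundaries = [t for t, _ in points[1:]] + [end_time]
    weighted = sum(price * (t_next - t) for (t, price), t_next in zip(points, boundaries))
    return weighted / (end_time - points[0][0])


# Hypothetical 10-minute window: price 100 throughout, except the final
# 12 seconds (one block), where a flash-loan trade pushes spot to 1000.
observations = [(0, 100), (588, 1000)]
spot = observations[-1][1]
avg = twap(observations, end_time=600)
print(spot, avg)                 # 1000 118.0

# Multi-source aggregation: a median means one bad feed cannot set the price.
print(median([avg, 101, 99]))    # 101
```

A 10x spot manipulation moves this TWAP by 18%; sustaining the distortion across the whole window would require holding the price for many blocks, which is far more expensive.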

MEV (Maximal Extractable Value, originally Miner Extractable Value) is the value that block proposers, and the builders and searchers competing for their blockspace, can capture by reordering, inserting, or censoring transactions within a block. At the benign end this is arbitrage. At the hostile end it is front-running and sandwich attacks at scale.

Whale-controlled governance is a related risk at the protocol level. When token voting determines protocol upgrades, a sufficiently large holder, or a coalition of holders, can pass proposals that benefit themselves at the expense of other users.

Mitigations at this layer

Fuzzing tools (Echidna, Medusa) catch emergent state violations that unit tests miss. Foundry also has invariant fuzzing built in, making it accessible if you are already using it for testing. Threat modelling (sitting down and systematically asking “what can an adversary do if they control this input?”) is a valuable technique. Audits can focus on contract interactions, not just the safety of single contracts. Bug bounties provide mitigation in this layer too. To reduce exposure to sandwich attacks and other MEV, you can bypass the public mempool and submit transactions through private order-flow services such as MEV Blocker, which act essentially as private mempools.
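The idea behind invariant fuzzing can be sketched without any of those tools: apply random sequences of calls and check a property after every step. The hand-rolled Python loop below is not the API of Echidna, Medusa, or Foundry; the token classes are hypothetical, and the bug (caching both balances before a transfer, which mints tokens on a self-transfer) is planted for illustration.

```python
import random


class Token:
    def __init__(self):
        self.balances = {}
        self.total_supply = 0

    def mint(self, user, amount):
        self.balances[user] = self.balances.get(user, 0) + amount
        self.total_supply += amount


class BuggyToken(Token):
    def transfer(self, src, dst, amount):
        src_bal = self.balances.get(src, 0)
        dst_bal = self.balances.get(dst, 0)     # cached before the debit
        if amount > src_bal:
            return False
        self.balances[src] = src_bal - amount
        self.balances[dst] = dst_bal + amount   # if src == dst, this overwrites the debit
        return True


class FixedToken(Token):
    def transfer(self, src, dst, amount):
        if amount > self.balances.get(src, 0):
            return False
        self.balances[src] = self.balances.get(src, 0) - amount
        self.balances[dst] = self.balances.get(dst, 0) + amount  # re-read after the debit
        return True


def fuzz(token_cls, steps=1_000, seed=0):
    """Apply random transfers; return the first call that breaks the invariant."""
    rng = random.Random(seed)
    users = ["alice", "bob", "carol"]
    token = token_cls()
    for user in users:
        token.mint(user, 100)
    for _ in range(steps):
        call = (rng.choice(users), rng.choice(users), rng.randint(0, 150))
        token.transfer(*call)
        # Invariant: balances must always sum to the total supply.
        if sum(token.balances.values()) != token.total_supply:
            return call
    return None


print(fuzz(BuggyToken))   # some self-transfer call, where src == dst
print(fuzz(FixedToken))   # None
```

No single-call unit test of "alice sends bob 10" would find this bug; a random sequence that checks a global property finds it almost immediately.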

As protocols become more cross-chain and more deeply composed, this layer gets harder, not easier.

3. Human-level Vulnerabilities

This layer covers human and operational risk. Where the previous layers involve code and systems, this one is about the people who design, build, deploy, and run the protocol and the processes around them.

OpSec failures around key management are perennial. Lost keys, compromised keys, keys that were “just temporarily” on a hot machine. For any meaningful treasury or multisig, keys should not exist on any networked device. Institutional wallet key solutions change the threat model considerably; I have written about one such service previously.

Phishing and social engineering targeting team members, particularly those with signing authority, are increasingly sophisticated. Attackers research their targets, spoof credible communications, and create urgency. The February 2025 Bybit hack is a high-profile case that involved multiple vulnerability types, including social engineering and a supply-chain attack. NCC Group’s technical post-mortem is a thorough account of how it unfolded.

Faulty business logic is hard to catch in testing. Suppose the specification itself is wrong, for example a flawed pricing algorithm. Every test will pass, correctly confirming that the code implements the specification, but the specification is the problem.
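Here is a minimal Python illustration of a test that passes against a flawed specification. The 0.30% fee and the round-down rule are hypothetical; the point is that the spec-conforming test is green while the rounding rule lets small trades pay nothing.

```python
FEE_BPS = 30  # hypothetical 0.30% fee, in basis points


def fee(amount: int) -> int:
    """Spec as written: fee = amount * FEE_BPS / 10_000, rounded down."""
    return amount * FEE_BPS // 10_000


# A unit test that faithfully checks the spec passes...
assert fee(1_000_000) == 3_000


# ...but under the same spec, any trade of 333 units or fewer pays zero fee,
# so splitting one large trade into many small ones avoids the fee entirely.
def total_fee_if_split(amount: int, pieces: int) -> int:
    size = amount // pieces
    return sum(fee(size) for _ in range(pieces))


print(fee(999_000))                        # 2997
print(total_fee_if_split(999_000, 3_000))  # 0
```

Whether this is exploitable in practice depends on transaction costs, but it is exactly the kind of flaw no spec-driven test will flag.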

Corruption and coercion are real risks for projects that are sufficiently valuable and whose key holders are publicly known. The “wrench attack” (physically coercing a key holder into signing) is not just a meme.

Poor audit processes are common. Projects skip audits due to cost or timeline pressure, audit only part of their codebase, or add “tiny fixes” to the audited code and put that amended code live instead. Contracts that are out of scope (multisigs, bridges, peripheral contracts) are often where the actual exploit lands.

Launch-targeted scams consistently cluster around moments of maximum attention: token launches, high-profile protocol announcements, airdrops. The larger the event, the more sophisticated the social engineering campaign surrounding it.

Address poisoning deserves a mention. An attacker sends tokens from a carefully crafted address that resembles a known address (matching prefix and suffix) with the goal of polluting your transaction history. When you next go to copy an address from your history, you select the poisoned one. (I have written about this in more detail previously.)
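The attack works because humans compare the ends of an address, not the whole thing. The Python sketch below contrasts that habit with a full comparison; both addresses are constructed for illustration and belong to no one. Checksum casing (EIP-55) does not help here, because a poisoned address is a perfectly valid address.

```python
def looks_like(a: str, b: str, prefix: int = 4, suffix: int = 4) -> bool:
    """What a hurried human checks: the first and last few hex characters."""
    a, b = a.lower().removeprefix("0x"), b.lower().removeprefix("0x")
    return a[:prefix] == b[:prefix] and a[-suffix:] == b[-suffix:]


def is_same_address(a: str, b: str) -> bool:
    """What should be checked: all 40 hex characters (case-insensitive)."""
    return a.lower() == b.lower()


# Hypothetical addresses: same first and last four characters, different middle.
known = "0x1a2B" + "0" * 32 + "9f3E"
poisoned = "0x1a2b" + "7" * 32 + "9f3e"

print(looks_like(known, poisoned))        # True: passes the eyeball check
print(is_same_address(known, poisoned))   # False: a different account entirely
```

Attackers can generate vanity addresses matching more than four characters at each end, so the only robust habit is to take recipients from a verified address book rather than from transaction history.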

Mitigations at this layer

Hardware wallets and a well-run multi-signature approval process for transaction signing. Security training for the whole team, not just engineers. Thorough audit scoping: if your system relies on bridges, they belong in audit scope. Response readiness matters here too: drills, documented incident response runbooks, and relationships with legal counsel. SEAL-911 is a free, round-the-clock emergency hotline staffed by vetted white-hat security researchers.
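The core rule of an M-of-N multisig is simple enough to sketch in Python. `MultisigProposal` and the signer names are hypothetical, and a real multisig verifies cryptographic signatures rather than names. Note what the threshold does not protect against: if a compromised signing interface shows every signer the same misleading transaction, all M approvals arrive anyway, so each signer must independently verify what they are signing.

```python
from dataclasses import dataclass, field


@dataclass
class MultisigProposal:
    signers: frozenset            # the N authorised keys
    threshold: int                # M approvals required
    approvals: set = field(default_factory=set)

    def approve(self, signer):
        if signer not in self.signers:
            raise PermissionError(f"{signer} is not a signer")
        self.approvals.add(signer)   # a set, so repeat approvals count once

    def executable(self) -> bool:
        return len(self.approvals) >= self.threshold


proposal = MultisigProposal(frozenset({"a", "b", "c", "d", "e"}), threshold=3)
proposal.approve("a")
proposal.approve("a")             # duplicate: still one approval
proposal.approve("b")
print(proposal.executable())      # False: 2 of 3
proposal.approve("c")
print(proposal.executable())      # True: 3 of 3
```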

4. External Vulnerabilities

The final layer covers risks that originate outside your project, outside your immediate control, yet can still destroy your protocol.

Stablecoin depegs and collapses are a real risk. The May 2022 collapse of Terra’s UST stablecoin and its sister token LUNA showed that foundational DeFi infrastructure can simply stop existing, taking every protocol that depends on it down with it. Protocols built on top of stablecoins inherit that counterparty risk.

Supply-chain attacks target the developer toolchain itself: npm packages, build scripts, CI/CD pipelines, compromised dependencies. An attacker who can modify your build artefact before deployment wins regardless of how good your Solidity is. The KelpDAO exploit in April 2026, in which attackers replaced go-ethereum node binaries to approve a fraudulent cross-chain transfer, is a recent example of this class.
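One basic defence against tampered binaries is refusing to run anything whose digest does not match a value pinned in advance. The Python sketch below uses SHA-256 from the standard library; `verify_artifact` is a hypothetical helper, and the pinned digest must come from an independent channel (for example a reproducible build you ran yourself), since a checksum fetched from the same compromised source proves nothing.

```python
import hashlib


def sha256_file(path: str) -> str:
    """Stream the file in chunks so large binaries need not fit in memory."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(65536), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_artifact(path: str, pinned_sha256: str) -> None:
    """Raise if the file's digest differs from the pinned value."""
    actual = sha256_file(path)
    if actual != pinned_sha256.lower():
        raise RuntimeError(f"{path}: digest mismatch (got {actual})")
```

In a deployment pipeline, a check like this would run before a node binary or build artefact is used, and a mismatch would halt the pipeline rather than log a warning.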

Nation-state and advanced persistent threats are no longer theoretical. Nation-state actors (the FBI attributed the Bybit theft to North Korea) have pulled off multi-stage attacks combining technical exploits with social engineering, and the resources behind them are substantial.

Reputational risk is technically external but practically devastating. An exploit, even one from which funds are fully recovered, can permanently damage the credibility of a protocol. Recovery speed and communication quality matter as much as the technical response.

Mitigations at this layer

On-chain monitoring (Hypernative, Forta, and similar) can flag anomalous behaviour, sometimes early enough to pause contracts before an exploit completes. Secure development practices throughout the supply chain (dependency pinning, reproducible builds, CI artefact signing) reduce the surface for supply-chain compromise. Response readiness means drills, pre-agreed pause procedures, and relationships with exchanges and other protocols for a coordinated response.
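To give a flavour of what a monitoring rule looks like, here is a minimal Python sketch of one simple heuristic: flag a pause when outflows within a sliding time window exceed a share of total value locked. The class name, thresholds, and amounts are hypothetical; commercial monitoring services use far richer signals, and acting on the flag still requires a pause mechanism and someone authorised to trigger it.

```python
from collections import deque


class OutflowMonitor:
    """Flags when outflows inside a sliding time window exceed a share of TVL."""

    def __init__(self, tvl: int, max_bps: int, window_seconds: int):
        self.tvl = tvl
        self.max_bps = max_bps              # threshold in basis points of TVL
        self.window = window_seconds
        self.events = deque()               # (timestamp, amount), oldest first
        self.window_total = 0

    def record_outflow(self, timestamp: int, amount: int) -> bool:
        """Record an outflow; return True if the protocol should be paused."""
        self.events.append((timestamp, amount))
        self.window_total += amount
        # Drop outflows that have aged out of the window.
        while self.events and self.events[0][0] <= timestamp - self.window:
            _, old_amount = self.events.popleft()
            self.window_total -= old_amount
        return self.window_total * 10_000 > self.max_bps * self.tvl


monitor = OutflowMonitor(tvl=1_000_000, max_bps=500, window_seconds=3_600)  # 5% per hour
print(monitor.record_outflow(0, 20_000))    # False
print(monitor.record_outflow(100, 20_000))  # False (40,000 in the window)
print(monitor.record_outflow(200, 15_000))  # True  (55,000 > 50,000)
```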

The Takeaway

Smart contract vulnerabilities are not a single problem. They are four overlapping problems:

| Layer | Character | Primary mitigations |
|---|---|---|
| Code-level | Well-understood | Good engineering, audits, OpenZeppelin, testing |
| Composability-level | Emergent, growing with DeFi complexity | Fuzzing, threat modelling, audits |
| Human-level | Social, organisational | Key hygiene, training, audit scope discipline |
| External | Systemic, sometimes uncontrollable | Monitoring, response readiness, supply-chain hygiene |

The code-level layer has historically received the most attention, yet the human-level and external layers are where equally costly failures happen. A team that is rigorous about all four is doing real security work.

AI and vulnerability detection

A trend worth watching across all four layers is, unsurprisingly, AI. A recent a16z report explains how AI is becoming very good at identifying smart contract vulnerabilities, including composability-level bugs that are harder for humans to trace manually, though it still struggles to exploit them unaided. The same capability that helps defenders scan for vulnerabilities is also lowering the bar for attackers.